TRADING RESOURCES

VIDEOS

Bubbles and Crashes - Planet Finance Documentary

Step into the dynamic and exhilarating realm of finance with "Bubbles and Crashes | Planet Finance", a captivating documentary that takes viewers on a thrilling journey through the highs and lows of the financial markets. From gripping narratives of market scandals to insightful analyses of investor behavior, this documentary offers a fascinating glimpse into the inner workings of the global economy.

But the adventure doesn't stop there! Dive deeper into the world of finance with our curated articles inspired by the documentary. Whether you're a seasoned trader looking to sharpen your skills or an educator seeking engaging resources for your students, our articles have something for everyone.

Discover practical trading strategies, unravel the mysteries of market psychology, and gain valuable insights into the forces that drive financial markets. With our study guides, discussion questions, and lesson plans, you'll have everything you need to enhance your understanding of finance and take your trading game to the next level.

Don't miss out on this exciting opportunity to explore the intersection of finance and storytelling. Join us on a journey through "Bubbles and Crashes | Planet Finance" and unlock the secrets of the financial world. Whether you're a curious beginner or a seasoned investor, there's always something new to learn in the world of finance.

The Intriguing World of Disaster Finance - Documentary

Delve into the captivating world of disaster finance with "Catastrophe for Sale | Planet Finance," a thought-provoking documentary that offers a unique glimpse into the intersection of financial markets and natural disasters. From risk assessment to market dynamics, this documentary unravels the complexities of disaster finance, providing viewers with a deeper understanding of the forces shaping economies in times of crisis.

But the journey doesn't end there. Accompanying the documentary is an insightful article that delves into the educational value of "Catastrophe for Sale | Planet Finance" for traders and investors. Through engaging storytelling and expert analysis, the article explores key themes such as risk management, ethical dilemmas, and market dynamics, offering valuable insights for navigating the turbulent waters of financial markets.

Whether you're a seasoned trader, an aspiring investor, or simply curious about the inner workings of financial systems, this documentary and accompanying article are sure to inform, inspire, and challenge your perspective on disaster finance. So sit back, grab your popcorn, and embark on a journey into the heart of financial markets with "Catastrophe for Sale | Planet Finance" and its accompanying educational resources.

The Biggest IPO Ever: China Steps on the Emergency Brake

Step into the world of high finance with "The Biggest IPO Ever: China Steps on the Emergency Brake," a captivating documentary that delves into the rise and fall of the Ant Group IPO. Join us as we navigate the twists and turns of this gripping saga, exploring the dynamics of supply and demand, the impact of government intervention, and the art of navigating market unpredictability.

In this comprehensive guide for traders and investors, we break down the key insights from the documentary and provide valuable lessons for navigating the complexities of financial markets. From understanding supply and demand dynamics to navigating regulatory risks, we offer practical advice and real-world examples to help you stay ahead of the game.

Whether you're a seasoned trader or a novice investor, this guide will equip you with the knowledge and tools you need to navigate the unpredictable world of finance. So, grab your popcorn and get ready to unravel the Ant Group IPO fiasco – and emerge a smarter, savvier investor.

Fortune Seekers: Japan's Influence on the Forex Markets

Embark on an enlightening journey into the world of forex trading with the documentary "Fortune Seekers: Japan's Influence on the Forex Markets". Dive deep into the intricate dynamics of the global currency exchange market as you follow the stories of Japanese retail traders and explore the impact of external events on market trends. This captivating film offers invaluable insights into the psychology of trading, risk management strategies, and the role of key players like institutional investors and central banks. Whether you're a seasoned trader or just starting out, "Fortune Seekers" is a must-watch for anyone looking to enhance their understanding of forex trading. Join us as we unravel the mysteries of the forex market and uncover the secrets to success in this exhilarating documentary experience.

Exploring Oil Trading: Free Oil - Planet Finance Documentary

Embark on an exhilarating journey into the heart of the global oil market with "Free Oil | Planet Finance". This captivating documentary offers viewers a front-row seat to the fast-paced world of oil trading, where fortunes are made and lost in the blink of an eye. Join us as we delve into the key themes, gripping storytelling, and thought-provoking messages that make "Free Oil | Planet Finance" a must-watch for anyone intrigued by finance and economics.

With its seamless blend of interviews, visuals, and real-time market data, "Free Oil | Planet Finance" takes viewers on a rollercoaster ride through the highs and lows of oil trading. From the bustling streets of New York City to the quiet plains of Cushing, Oklahoma, the documentary offers a comprehensive look at the global oil market and the individuals who shape it.

Experience the adrenaline rush of trading alongside seasoned professionals and unsuspecting day traders alike. Gain insights into the ethical dilemmas of speculation, the impact of market dynamics on the global economy, and the personal toll that trading can take on individuals.

Whether you're a seasoned trader or simply curious about the inner workings of the financial world, "Free Oil | Planet Finance" is sure to leave you spellbound. So, grab your popcorn and buckle up for a thrilling adventure into the wild waves of oil trading.

Nature of the Beast: A Deep Dive into the Financial Universe

Dive into the intricate world of global finance with the "Nature of the Beast | Planet Finance" documentary. This captivating film offers a fascinating exploration of the evolution of financial markets, from bustling trading floors to the high-tech realm of algorithmic trading. Discover the forces shaping our modern economy and gain valuable insights into the fast-paced world of investing and trading. Whether you're a seasoned investor or a curious learner, this engaging content promises to educate and inspire. Join us on an eye-opening journey through the heart of the financial universe.

Forex Mastery: Multimillionaire Trader’s Journey - Documentary

Embark on a captivating journey into the world of forex trading with our exclusive content, “Mastering Forex: Insights from Jeff's Multimillionaire Journey”. Delve into a comprehensive analysis of Jeff's life, trading strategies, and mindset, as showcased in the documentary. Gain valuable insights for navigating the complexities of forex trading, uncovering the secrets to success from a seasoned multimillionaire trader. This content serves as a definitive guide for traders, offering a unique blend of in-depth analysis and practical lessons. Elevate your trading acumen and learn to master the forex markets with Jeff's invaluable experience. Explore the highs, lows, and triumphs of a true forex expert in this informative guide.

Cryptopia: Bitcoin, Blockchains and the Internet Future

Dive into the heart of the digital revolution with “Cryptopia Unveiled: Bitcoin, Blockchain, and the Internet's Next Frontier”. This comprehensive exploration delves into the intricate world of cryptocurrencies, spotlighting the enigmatic Satoshi Nakamoto and unraveling the genius behind Bitcoin. Discover how blockchain technology has evolved from its humble beginnings to reshape the landscape of the internet, paving the way for Web 3.0.

Explore the challenges and controversies that have defined the crypto landscape, shedding light on the multifaceted aspects of this transformative technology. From decentralized currencies to the expansive potential of blockchain in the digital age, this article navigates through the dynamic and sometimes controversial developments in the crypto space.

Meet key figures who have played pivotal roles in shaping the future of blockchain technology, from visionaries to controversial figures, and understand the intricate dynamics of leadership within the crypto realm. Uncover the unique leadership style of Bitcoin, a faceless force that continues to reshape the digital landscape.

In conclusion, "Cryptopia Unveiled" is a manifesto for the digital revolution, offering insights into the decentralized future that awaits. Join us on this journey through the world of cryptocurrencies, blockchain, and the Internet's next frontier.

The Corporation Documentary: Unmasking Corporate Impact

“The Corporation” is a compelling and thought-provoking documentary directed by Mark Achbar and Jennifer Abbott. Released in 2003, the film meticulously dissects the multifaceted nature of corporations in modern society. Through expert interviews, animations, and case studies, it examines the historical evolution of corporations from legal entities to dominant forces. The documentary raises questions about their ethical responsibility and unrelenting pursuit of profit, often at the expense of environmental and social well-being. Balanced in its approach, the film features insights from both corporate insiders and critics, shedding light on conflicting viewpoints. It presents case studies highlighting the environmental and social impacts of corporate actions, urging viewers to consider the broader implications. “The Corporation” also delves into regulatory gaps and corporate influence over politics, emphasizing the need for transparency and ethical business practices. In essence, “The Corporation” serves as a compelling call for heightened awareness, accountability, and responsible corporate governance. It prompts audiences to critically assess the role of corporations in shaping the world and encourages a more balanced and ethical approach to business. Through its engaging storytelling and insightful content, the documentary provokes thoughtful reflection and fosters discussions about corporate power and its impact on society.

Trading Realities: Unveiling the Global Commodity Market

“Behind Closed Doors: Commodity Traders, How It Works” is a documentary that delves into the intricate and often secretive world of commodity trading. The film provides an in-depth exploration of the role and influence of commodity traders in global markets, shedding light on their practices, motivations, and impact on economies, societies, and the environment. The documentary takes viewers behind the scenes of this complex industry, revealing the mechanisms that drive the buying, selling, and speculation of essential goods such as food, energy, and raw materials. Through interviews with experts, traders, and industry insiders, the film offers insights into the strategies employed by traders to predict price fluctuations and capitalize on market trends. The narrative highlights the historical evolution of commodity trading, tracing its origins from traditional marketplaces to the modern financial markets, such as the Chicago Board of Trade. It examines how these markets have become centers of speculation, where traders make bets on the future prices of commodities, often with significant financial implications. Throughout the documentary, viewers are introduced to key figures in the commodity trading world, including successful traders and major corporations involved in the industry. The film showcases real-world examples of trading successes and failures, illustrating the potential rewards and risks that come with this high-stakes profession. It also addresses the ethical and social implications of commodity trading. It explores how trading practices can impact vulnerable populations, such as small farmers and communities in developing countries, and how the pursuit of profits can sometimes conflict with broader societal needs, such as food security. Overall, the documentary offers a thought-provoking examination of commodity trading, inviting viewers to consider the complexities and consequences of a global system driven by financial speculation and the quest for profit.

Four Horsemen Documentary

“Four Horsemen” is a compelling and thought-provoking documentary that unveils the hidden forces orchestrating our world’s economic landscape and posing significant threats to our collective future. With a captivating blend of insightful analysis and passionate storytelling, the film casts a critical eye on the global economic system, exposing the manipulations and injustices perpetuated by a powerful few who exploit the masses and ravage our planet for their own gain. Through in-depth interviews with a diverse range of esteemed thinkers, economists, and activists, “Four Horsemen” unearths the intricate web of interconnected issues plaguing our society. It delves into the pervasive influence of financial institutions, the corrosive effects of income inequality, and the profound consequences of an economy built on unsustainable debt. By examining these underlying factors, the documentary sheds light on the dire social and environmental crises we face today. Yet, amidst the alarming revelations, “Four Horsemen” offers a glimmer of hope. It presents visionary alternatives and pragmatic solutions that challenge conventional wisdom and inspire collective action. By reimagining our values and embracing models rooted in justice and sustainability, the film advocates for a transformation of our economic paradigm. Through its powerful narrative, “Four Horsemen” awakens viewers to the urgent need for change and empowers them to question the status quo. It encourages a deep examination of our societal priorities and sparks conversations that have the potential to shape a more equitable, resilient, and compassionate future. This documentary is a rallying cry to individuals and communities worldwide, reminding us that the time to act is now, before the consequences become irreversible.

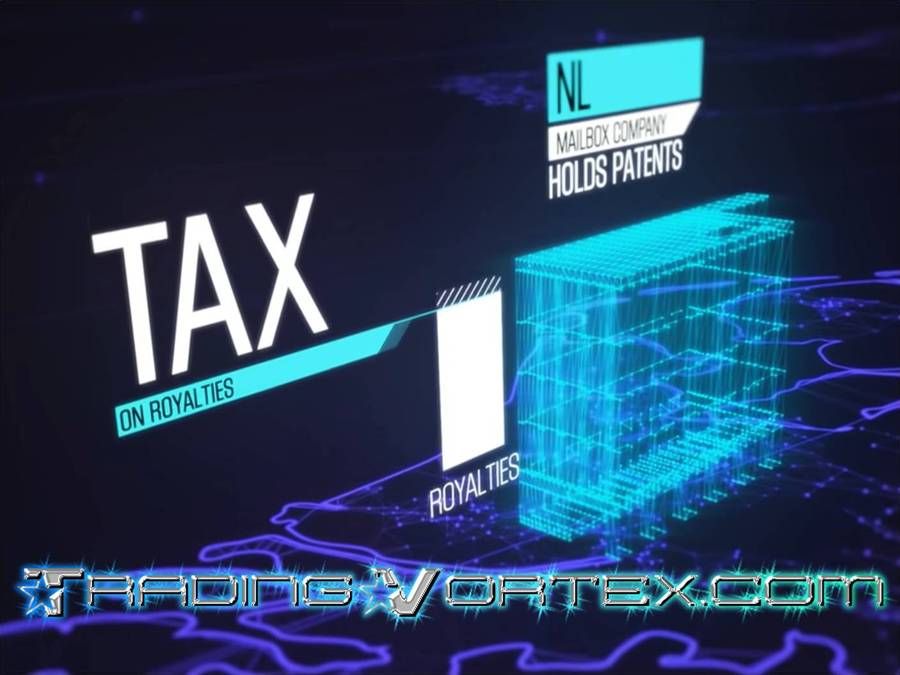

The Tax Free Tour - VPRO Documentary

“The Tax Free Tour” is a documentary produced by VPRO, a Dutch public broadcaster. Released in 2013, the film investigates the issue of tax avoidance by multinational corporations. It aims to uncover the strategies used by these companies to minimize their tax liabilities and explores the impact of these practices on society and economies worldwide. The documentary features interviews with experts, economists, and journalists who provide insights into the complex world of tax avoidance. They discuss various methods employed by multinational corporations to shift profits to low-tax jurisdictions, exploit loopholes, and utilize offshore tax havens. The film examines how these practices affect tax revenues, income inequality, public services, and the overall fairness of the tax system. By delving into the intricate web of tax avoidance, "The Tax Free Tour" aims to raise awareness about the issue and prompt discussions on the need for reforms in tax policies and global economic governance. It provides viewers with a deeper understanding of the mechanisms behind corporate tax avoidance and encourages critical thinking about its implications.

Flash Crash 2010: Unveiling the Dark Side of Automated Trading

“Flash Crash 2010” is a captivating documentary that delves into the May 6, 2010, stock market plunge known as the Flash Crash. Through exclusive interviews and immersive data visualizations, the film offers a unique perspective on this unprecedented event, shedding light on the inner workings of high-frequency trading and the risks it poses to our financial system. The documentary explores the hidden dangers and ethical implications of relying on automated trading systems, prompting critical questions about market stability and the role of regulators. Prepare to be enthralled as “Flash Crash 2010” uncovers the untold story behind this historic crash and challenges our perception of the modern financial landscape.

To Catch a Trader Documentary - The Hedge-Fund King Story

“To Catch a Trader” is a gripping documentary that explores insider trading on Wall Street and its impact on the financial industry. Produced by PBS (Public Broadcasting Service), the film examines the involvement of Steve Cohen's hedge fund, SAC Capital, in the insider trading scandal that rocked the financial industry and ultimately resulted in a $1.8 billion settlement with the U.S. government. Through interviews with industry experts, regulators, and academics, the film raises important questions about the fairness and transparency of financial markets, as well as the potential for market manipulation and other illegal practices. Overall, “To Catch a Trader” is a thought-provoking and illuminating documentary that sheds light on the hidden world of hedge funds and insider trading.

The Ascent of Money Documentary - All Episodes

“The Ascent of Money” is a documentary series that explores the history and evolution of money, finance, and economics. Produced in 2008 by Channel 4 in the UK and PBS in the US, and based on the book of the same name by Niall Ferguson, this six-part series takes the viewer on a journey through time, examining the various financial systems and innovations that have shaped human history. Each of the six episodes (“Dreams of Avarice”, “Human Bondage”, “Blowing Bubbles”, “Risky Business”, “Safe as Houses”, and “Chimerica”) focuses on a different aspect of finance and economics, providing a historical overview of the topic and using interviews, archival footage, and dramatic reenactments to bring the stories to life. Through this series, viewers can gain unique insights into the economic and political factors that drove these developments, and the lessons that can be learned from them.

Meltdown: The Secret History of the Global Financial Collapse

“Meltdown: The Secret History of the Global Financial Collapse” travels the world, from Wall Street to Dubai to China, to investigate the secret history of the global financial collapse. It tells the story of the bankers who crashed the world, the leaders who struggled to save it, and the ordinary families who got crushed. CBC's Terence McKenna takes viewers behind the headlines and into the backrooms of the highest levels of world governments and banking institutions, revealing the astonishing level of backstabbing and tension behind the scenes as the world came dangerously close to another Great Depression. It also tells the stories of desperate U.S. homeowners facing foreclosure, disillusioned Ontario autoworkers, and furious employees in France who kidnapped their own bosses. Since the financial meltdown began, trillions of dollars have been spent rescuing banks and jumpstarting economies, yet recovery remains fragile. The millions around the world who lost homes and jobs are demanding answers: How did it all go so wrong? Who is to blame? They are still waiting for answers, because to date, only a few small-time players have been held to account. No major banking, regulatory or government figures have yet been convicted of any wrongdoing.

Million Dollar Traders - BBC Reality TV Series

“Million Dollar Traders” is a British reality television series (2009) devised by hedge fund manager Lex van Dam, who attempted to recreate the famous Turtle Traders experiment run by Richard Dennis in the 1980s. Eight ordinary people get a million dollars, fifteen days of intensive training, and two months to run their own hedge fund. Van Dam wants to see whether they can make a killing and beat the professionals. The experience reveals the inner workings of a City trading floor. Van Dam provides the money himself, and he expects a return on his investment. Yet no one foresees the financial crisis that awaits them: the traders were selected in the spring of 2008, before the US credit crisis accelerated. The successful candidates were trained and sent to a specially created trading room in the heart of the Square Mile. Among them are an environmentalist, a military man, a boxing promoter, an entrepreneur, a retired computer consultant, a veterinarian, a student and a shopkeeper.

Quants: The Alchemists of Wall Street - VPRO Documentary

Quants: The Alchemists of Wall Street is a documentary that explores the world of quants, the mathematical wizards and computer programmers who design the financial products and algorithms that shape the global financial system. The documentary reveals how the quants have created a complex and risky model-based financial system that is difficult to control and understand, and how they are now at the forefront of another technological revolution in finance: trading at the speed of light using high-frequency trading and artificial intelligence. The documentary features interviews with some of the leading quants in the industry, such as Paul Wilmott, Emanuel Derman, David Harding, and James Simons, who share their insights, opinions, and experiences on the role and impact of quants in the financial markets. The documentary also examines the challenges and dangers of relying on mathematical models to quantify human (economic) behavior, and the ethical and moral implications of using technology to manipulate and influence the economy. The documentary raises important questions about the nature and limits of quantification, the role and responsibility of quants in society, and the future of finance in the age of machines. Quants: The Alchemists of Wall Street is a fascinating and informative documentary that offers a rare glimpse into the arcane and secretive corner of Wall Street and global finance.

How To Change Your Career - Documentary

Build the confidence to change your career through this six-part series of films, where those with the courage to do it share their insights with the FT's work and careers writer Emma Jacobs. In part one, Emma meets two entrepreneurs who gave up climbing the corporate ladder to go it alone. Part two looks at how to leave a well-paid career in finance to turn a hobby into a steady job. In part three, Emma meets a former senior executive who left behind a high-powered career to retrain as a teacher. Part four is all about redundancy and how to bounce back when it feels like your world is falling apart. In part five, Emma learns how to launch a new career after spending several years at home with children. And part six examines whether retraining as a coder is all it is cracked up to be.

Money, Power and Wall Street - FRONTLINE Documentary

The special FRONTLINE investigation “Money, Power and Wall Street” tells the inside story of the struggles to rescue and repair a shattered economy, exploring key decisions, missed opportunities, and the unprecedented and uneasy partnership between government leaders and titans of finance that affects the fortunes of millions of people around the world.

TradingVortex.com® 2019 © All Rights Reserved.
